What are Developer metrics?

Crafting the perfect Developer metrics can feel overwhelming, particularly when you're juggling daily responsibilities. That's why we've put together a collection of examples to spark your inspiration.

Copy these examples into your preferred app, or you can also use Tability to keep yourself accountable.

Find Developer metrics with AI

While we have some examples available, you will likely have specific scenarios that aren't covered here. You can use our free AI metrics generator to generate your own metrics.

Examples of Developer metrics and KPIs

1. Code Quality

Assesses the readability, structure, and efficiency of the written code in HTML, CSS, and JavaScript

What good looks like for this metric: Clean, well-commented code with no linting errors

Ideas to improve this metric:

- Utilise code linters and formatters
- Adopt a consistent coding style
- Refactor code regularly
- Practise writing clear comments
- Review code with peers

2. Page Load Time

Measures the time it takes for a webpage to fully load in a browser

What good looks like for this metric: Less than 3 seconds

Ideas to improve this metric:

- Minimise HTTP requests
- Optimise image sizes
- Use CSS and JS minification
- Leverage browser caching
- Use content delivery networks

3. Responsive Design

Evaluates how well a website adapts to different screen sizes and devices

What good looks like for this metric: Seamless functionality across all devices

Ideas to improve this metric:

- Use relative units like percentages
- Implement CSS media queries
- Test designs on multiple devices
- Adopt a mobile-first approach
- Utilise frameworks like Bootstrap

4. Cross-browser Compatibility

Ensures a website functions correctly across different web browsers

What good looks like for this metric: Consistent experience on all major browsers

Ideas to improve this metric:

- Test site on all major browsers
- Use browser-specific prefixes
- Avoid deprecated features
- Employ browser compatibility tools
- Regularly update code for latest standards

5. User Experience (UX)

Measures how user-friendly and intuitive the interface is for users

What good looks like for this metric: High user satisfaction and easy navigation

Ideas to improve this metric:

- Simplify navigation structures
- Ensure consistent design patterns
- Conduct user testing regularly
- Gather and implement user feedback
- Improve the accessibility of designs


1. Team Velocity

Measures the amount of work completed by a team during a sprint or iteration

What good looks like for this metric: Typically varies based on team size and complexity of tasks

Ideas to improve this metric:

- Improve task estimation accuracy
- Use consistent metrics over time
- Remove bottlenecks in the process
- Enhance collaboration within the team
- Provide adequate resources and support

2. Code Quality

Assesses the quality of code based on factors like complexity, maintainability, and bug count

What good looks like for this metric: Low defect rate and maintainable code structure

Ideas to improve this metric:

- Implement code review practices
- Use automated testing tools
- Adopt coding standards
- Conduct regular code refactoring
- Encourage continuous learning and training

3. Lead Time

The time taken from task assignment to its completion

What good looks like for this metric: Typically between 1-2 weeks for small to medium tasks

Ideas to improve this metric:

- Prioritize tasks effectively
- Minimize task switching
- Improve process efficiency
- Streamline communication channels
- Utilize efficient project management tools

4. Employee Satisfaction

Measures team members’ contentment and engagement at work

What good looks like for this metric: Aiming for a high satisfaction score on surveys

Ideas to improve this metric:

- Regularly solicit feedback
- Provide career development opportunities
- Foster a positive work environment
- Offer competitive compensation and benefits
- Recognize and reward good performance

5. Sprint Goal Success Rate

Percentage of sprints where the team achieves the sprint goal

What good looks like for this metric: 80-90% sprint goal success rate

Ideas to improve this metric:

- Set clear and realistic goals
- Ensure goals align with broader objectives
- Assess and address risks proactively
- Enhance team focus on sprint objectives
- Track progress regularly during sprints
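If you keep a simple record of each sprint, team velocity and sprint goal success rate can be computed in a few lines. The sketch below is only an illustration: the `Sprint` record, its field names, and the sample numbers are assumptions made for this example, not the export format of any particular tool.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Sprint:
    """One finished sprint (hypothetical record shape for this example)."""
    name: str
    completed_points: int  # story points finished during the sprint
    goal_met: bool         # whether the team judged the sprint goal achieved


def average_velocity(sprints: list[Sprint]) -> float:
    """Mean story points completed per sprint."""
    return mean(s.completed_points for s in sprints)


def sprint_goal_success_rate(sprints: list[Sprint]) -> float:
    """Percentage of sprints in which the sprint goal was met."""
    met = sum(1 for s in sprints if s.goal_met)
    return 100 * met / len(sprints)


# Made-up sample data: five sprints, four of which met their goal.
sprints = [
    Sprint("S1", 21, True),
    Sprint("S2", 18, False),
    Sprint("S3", 24, True),
    Sprint("S4", 25, True),
    Sprint("S5", 22, True),
]

print("Average velocity:", average_velocity(sprints))
print("Sprint goal success rate (%):", sprint_goal_success_rate(sprints))
```

With this sample data the success rate is 80%, which sits at the lower end of the 80-90% range mentioned above.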


1. Code Quality

Measures the standards of the code written by the developer using metrics like cyclomatic complexity, code churn, and code maintainability index

What good looks like for this metric: Maintainability index above 70

Ideas to improve this metric:

- Conduct regular code reviews
- Utilise static code analysis tools
- Adopt coding standards and guidelines
- Refactor code regularly to reduce complexity
- Invest in continuous learning and training

2. Deployment Frequency

Evaluates the frequency at which a developer releases code changes to production

What good looks like for this metric: Multiple releases per week

Ideas to improve this metric:

- Automate deployment processes
- Use continuous integration and delivery pipelines
- Schedule regular release sessions
- Encourage modular code development
- Enhance collaboration with DevOps teams

3. Lead Time for Changes

Measures the time taken from code commit to deployment in production, reflecting efficiency in development and delivery

What good looks like for this metric: Less than one day

Ideas to improve this metric:

- Streamline the code review process
- Optimise testing procedures
- Improve communication across teams
- Automate build and testing workflows
- Implement parallel development tracks

4. Change Failure Rate

Represents the proportion of deployments that result in a failure requiring a rollback or hotfix

What good looks like for this metric: Less than 15%

Ideas to improve this metric:

- Implement thorough testing before deployment
- Decrease batch size of code changes
- Conduct post-implementation reviews
- Improve error monitoring and logging
- Enhance rollback procedures

5. System Downtime

Assesses the total time that applications are non-operational due to code changes or failures attributed to backend systems

What good looks like for this metric: Less than 0.1% downtime

Ideas to improve this metric:

- Invest in high availability infrastructure
- Enhance real-time monitoring systems
- Regularly test system resilience
- Implement effective incident response plans
- Improve software redundancy mechanisms
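Lead time for changes and change failure rate can both be derived from the same deployment log. Here is a minimal sketch, assuming you can export one record per deployment with a commit time, a deploy time, and a flag for whether it needed a rollback or hotfix. The `Deployment` class and the sample data are hypothetical, and the median is used here simply as one reasonable way to summarise lead times.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Deployment:
    """One production deployment (hypothetical record shape)."""
    committed_at: datetime
    deployed_at: datetime
    failed: bool  # True if the deployment needed a rollback or hotfix


def median_lead_time(deployments: list[Deployment]) -> timedelta:
    """Median time from code commit to deployment in production."""
    return median(d.deployed_at - d.committed_at for d in deployments)


def change_failure_rate(deployments: list[Deployment]) -> float:
    """Percentage of deployments that resulted in a failure."""
    failures = sum(1 for d in deployments if d.failed)
    return 100 * failures / len(deployments)


# Made-up sample data: five deployments, one of which failed.
deployments = [
    Deployment(datetime(2024, 3, 1, 9), datetime(2024, 3, 1, 15), False),
    Deployment(datetime(2024, 3, 2, 10), datetime(2024, 3, 3, 10), True),
    Deployment(datetime(2024, 3, 4, 8), datetime(2024, 3, 4, 12), False),
    Deployment(datetime(2024, 3, 5, 9), datetime(2024, 3, 5, 19), False),
    Deployment(datetime(2024, 3, 6, 9), datetime(2024, 3, 6, 11), False),
]

print("Median lead time:", median_lead_time(deployments))
print("Change failure rate (%):", change_failure_rate(deployments))
```

In this sample the median lead time is six hours, which meets the "less than one day" target, while the 20% change failure rate misses the "less than 15%" target.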


1. Code Quality

Measures the frequency and severity of bugs detected in the codebase.

What good looks like for this metric: Less than 10 bugs per 1000 lines of code

Ideas to improve this metric:

- Implement regular code reviews
- Use static code analysis tools
- Provide training on best coding practices
- Encourage test-driven development
- Adopt a pair programming strategy

2. Deployment Frequency

Tracks how often code changes are successfully deployed to production.

What good looks like for this metric: Deploy at least once a day

Ideas to improve this metric:

- Automate the deployment pipeline
- Reduce bottlenecks in the process
- Regularly publish small, manageable changes
- Incentivise swift yet comprehensive testing
- Improve team communication and collaboration

3. Mean Time to Recovery (MTTR)

Measures the average time taken to recover from a service failure.

What good looks like for this metric: Less than 1 hour

Ideas to improve this metric:

- Develop a robust incident response plan
- Streamline rollback and recovery processes
- Use monitoring tools to detect issues early
- Conduct post-mortems and learn from failures
- Enhance system redundancy and fault tolerance

4. Test Coverage

Represents the percentage of code which is tested by automated tests.

What good looks like for this metric: 70% to 90%

Ideas to improve this metric:

- Implement continuous integration with testing
- Educate developers on writing effective tests
- Regularly update and refactor out-of-date tests
- Encourage a culture of writing tests
- Utilise behaviour-driven development techniques

5. API Response Time

Measures the time taken for an API to respond to a request.

What good looks like for this metric: Less than 200ms

Ideas to improve this metric:

- Optimise database queries
- Utilise caching effectively
- Reduce payload size
- Use load balancing techniques
- Profile and identify performance bottlenecks
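Mean Time to Recovery is an average over incident durations, so it is easy to compute once you record when each failure started and when service was restored. The sketch below assumes a hypothetical `Incident` record; the field names and sample incidents are made up for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Incident:
    """One service failure (hypothetical record shape)."""
    started_at: datetime   # when the failure began
    resolved_at: datetime  # when service was restored


def mean_time_to_recovery(incidents: list[Incident]) -> timedelta:
    """Average time between the start of a failure and its recovery."""
    durations = [i.resolved_at - i.started_at for i in incidents]
    # sum() needs a timedelta start value to add timedeltas together.
    return sum(durations, timedelta()) / len(durations)


# Made-up sample data: outages lasting 30, 45 and 75 minutes.
incidents = [
    Incident(datetime(2024, 5, 2, 10, 0), datetime(2024, 5, 2, 10, 30)),
    Incident(datetime(2024, 5, 9, 14, 0), datetime(2024, 5, 9, 14, 45)),
    Incident(datetime(2024, 5, 20, 8, 0), datetime(2024, 5, 20, 9, 15)),
]

print("MTTR:", mean_time_to_recovery(incidents))
```

The three sample incidents average out to 50 minutes, just inside the "less than 1 hour" target, even though one of them took longer than an hour on its own.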


1. Test Coverage

Measures the percentage of the codebase tested by automated tests, calculated as (number of lines or code paths tested / total lines or code paths) * 100

What good looks like for this metric: 70%-90% for well-tested code

Ideas to improve this metric:

- Increase automation in testing
- Refactor complex code to simplify testing
- Utilise test-driven development
- Regularly update and review test cases
- Incorporate pair programming

2. Defect Density

Calculates the number of confirmed defects divided by the size of the software entity being measured, typically measured as defects per thousand lines of code

What good looks like for this metric: Less than 1 bug per 1,000 lines

Ideas to improve this metric:

- Conduct thorough code reviews
- Implement static code analysis
- Improve developer training
- Use standard coding practices
- Perform regular software audits

3. Test Execution Time

The duration taken to execute all test cases, calculated by summing up the time taken for all tests

What good looks like for this metric: Shorter is better; aim for less than 30 minutes

Ideas to improve this metric:

- Optimise test scripts
- Use parallel testing
- Remove redundant tests
- Upgrade testing tools or infrastructure
- Automate test environment setup

4. Code Churn Rate

Measures the amount of code change within a given period, calculated as the number of lines of code added, modified, or deleted

What good looks like for this metric: 5%-10% considered manageable

Ideas to improve this metric:

- Emphasise quality over quantity in changes
- Increase peer code reviews
- Ensure clear and precise project scopes
- Monitor team workload to avoid burnout
- Provide comprehensive documentation

5. User Reported Defects

Counts the number of defects reported by users post-release, providing insight into the software's real-world performance

What good looks like for this metric: Ideally zero; otherwise less than 5% of total defects

Ideas to improve this metric:

- Enhance pre-release testing
- Gather detailed user feedback
- Offer user training and resources
- Implement beta testing
- Regularly update with patches and fixes
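The formulas described for test coverage, defect density, and code churn rate translate directly into code. This is a minimal sketch with hypothetical function names and made-up inputs. Expressing churn as a percentage of the total codebase is an assumption made here so that the result can be compared with the 5%-10% range.

```python
def coverage_percent(tested_lines: int, total_lines: int) -> float:
    """(lines tested / total lines) * 100."""
    return 100 * tested_lines / total_lines


def defect_density(confirmed_defects: int, lines_of_code: int) -> float:
    """Confirmed defects per thousand lines of code (KLOC)."""
    return confirmed_defects / (lines_of_code / 1000)


def churn_rate_percent(added: int, modified: int, deleted: int,
                       total_lines: int) -> float:
    """Lines added, modified or deleted in a period, as a share of the codebase.

    Dividing by total_lines is an assumption of this sketch.
    """
    return 100 * (added + modified + deleted) / total_lines


# Made-up numbers for a 10,000 to 15,000 line project.
print("Coverage (%):", coverage_percent(8200, 10000))
print("Defects per KLOC:", defect_density(12, 15000))
print("Churn (%):", churn_rate_percent(300, 150, 50, 10000))
```

With these inputs, coverage is 82%, defect density is 0.8 defects per KLOC, and churn is 5%, all within the ranges listed above.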


1. Feature Completion Rate

The percentage of features fully implemented and functional compared to the initial plan

What good looks like for this metric: 80% to 100% during development cycle

Ideas to improve this metric:

- Improve project management processes
- Ensure clear feature specifications
- Allocate adequate resources
- Conduct regular progress reviews
- Increase team collaboration

2. Planned vs. Actual Features

The ratio of features planned to features actually completed

What good looks like for this metric: Equal or close to 1:1

Ideas to improve this metric:

- Create realistic project plans
- Regularly update feature lists
- Adjust deadlines as needed
- Align teams on priorities
- Open channels for feedback

3. Feature Review Score

Average score from review sessions that evaluate feature completion and quality

What good looks like for this metric: Scores above 8 out of 10

Ideas to improve this metric:

- Provide detailed review criteria
- Use peer review strategies
- Incorporate customer feedback
- Use holistic testing methodologies
- Re-evaluate low scoring features

4. Feature Dependency Resolution Time

Average time taken to resolve issues linked to feature dependencies

What good looks like for this metric: Resolution time within 2 weeks

Ideas to improve this metric:

- Map feature dependencies early
- Optimise dependency workflow
- Increase team communication
- Utilise dependency management tools
- Prioritise complex dependencies

5. Change Request Frequency

Number of changes requested post-initial feature specification

What good looks like for this metric: Less than 10% of total features

Ideas to improve this metric:

- Ensure initial feature clarity
- Involve stakeholders early on
- Implement change control processes
- Clarify project scope
- Encourage proactive team discussions


1. Code Coverage

Measures the percentage of your code that is covered by automated tests

What good looks like for this metric: 70%-90%

Ideas to improve this metric:

- Increase unit tests
- Use code coverage tools
- Refactor complex code
- Implement test-driven development
- Conduct code reviews frequently

2. Code Complexity

Assesses the complexity of the code using metrics like Cyclomatic Complexity

What good looks like for this metric: 1-10 (Lower is better)

Ideas to improve this metric:

- Simplify conditional statements
- Refactor to smaller functions
- Reduce nested loops
- Use design patterns appropriately
- Perform regular code reviews

3. Technical Debt

Measures the cost of additional work caused by choosing easy solutions now instead of better approaches

What good looks like for this metric: Less than 5%

Ideas to improve this metric:

- Refactor code regularly
- Avoid quick fixes
- Ensure high-quality code reviews
- Update and follow coding standards
- Use static code analysis tools

4. Defect Density

Calculates the number of defects per 1000 lines of code

What good looks like for this metric: Less than 1 defect/KLOC

Ideas to improve this metric:

- Implement thorough testing
- Increase peer code reviews
- Enhance developer training
- Use static analysis tools
- Adopt continuous integration

5. Code Churn

Measures the amount of code that is added, modified, or deleted over time

What good looks like for this metric: 10-20%

Ideas to improve this metric:

- Stabilise project requirements
- Improve initial code quality
- Adopt pair programming
- Reduce unnecessary refactoring
- Enhance documentation
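To make the Cyclomatic Complexity score more concrete, here is a rough sketch that estimates it for a piece of Python source using the standard `ast` module. It starts at 1 and adds one for each decision point it finds. This is an approximation written for illustration: dedicated static analysis tools apply their own counting rules and may report different numbers, and the `cyclomatic_complexity` function and the sample code are assumptions of this example.

```python
import ast

# Node types that each add one decision point in this simplified count.
_BRANCH_NODES = (ast.If, ast.For, ast.AsyncFor, ast.While,
                 ast.ExceptHandler, ast.IfExp)


def cyclomatic_complexity(source: str) -> int:
    """Approximate cyclomatic complexity: 1 + number of decision points."""
    complexity = 1
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, _BRANCH_NODES):
            complexity += 1
        elif isinstance(node, ast.BoolOp):
            # "a and b and c" adds two extra paths.
            complexity += len(node.values) - 1
        elif isinstance(node, ast.comprehension):
            # One for the loop itself, plus one per "if" filter.
            complexity += 1 + len(node.ifs)
    return complexity


sample = '''
def classify(n):
    if n < 0 and n % 2 == 0:
        return "negative even"
    for i in range(n):
        if i == 3:
            return "has three"
    return "other"
'''

print("Estimated complexity:", cyclomatic_complexity(sample))
```

The sample function scores 5 (the base path, two `if` statements, one `and`, and one `for` loop), which is comfortably inside the 1-10 range. Splitting a high-scoring function into smaller ones is usually the quickest way to bring the number down.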


1. Defect Density

Defect density measures the number of defects per unit of software size, usually per thousand lines of code (KLOC)

What good looks like for this metric: 1-5 defects per KLOC

Ideas to improve this metric:

- Improve code reviews
- Implement automated testing
- Enhance developer training
- Increase test coverage
- Use static code analysis

2. Code Coverage

Code coverage measures the percentage of code that is executed by automated tests

What good looks like for this metric: 70-80%

Ideas to improve this metric:

- Write more unit tests
- Implement integration testing
- Use better testing tools
- Collaborate closely with QA team
- Regularly refactor code for testability

3. Mean Time to Resolve (MTTR)

MTTR measures the average time taken to resolve a defect once it has been identified

What good looks like for this metric: Less than 8 hours

Ideas to improve this metric:

- Streamline incident management process
- Automate triage tasks
- Improve defect prioritization
- Enhance developer expertise
- Implement rapid feedback loops

4. Customer-Reported Defects

This metric counts the number of defects reported by end users or customers

What good looks like for this metric: Less than 1 defect per month

Ideas to improve this metric:

- Implement thorough user acceptance testing
- Conduct regular beta tests
- Enhance support and issue tracking
- Improve customer feedback channels
- Use user personas in development

5. Code Churn

Code churn measures the amount of code changes over a period of time, indicating stability and code quality

What good looks like for this metric: 10-20%

Ideas to improve this metric:

- Encourage smaller, iterative changes
- Implement continuous integration
- Use version control effectively
- Conduct regular code reviews
- Enhance change management processes


1. Release Frequency

Measures the number of releases over a specific period. Indicates how quickly updates are being deployed.

What good looks like for this metric: 1-2 releases per month

Ideas to improve this metric:

- Automate deployment processes
- Implement continuous integration/continuous deployment practices
- Invest in developer training
- Regularly review and optimise code
- Deploy smaller, incremental updates

2. Lead Time for Changes

The average time it takes from code commit to production release. Reflects the efficiency of the development pipeline.

What good looks like for this metric: Less than one week

Ideas to improve this metric:

- Streamline workflow processes
- Use automated testing tools
- Enhance code review efficiency
- Implement Kanban or Agile methodologies
- Identify and eliminate bottlenecks

3. Change Failure Rate

Percentage of releases that cause a failure in production. Indicates the reliability of releases.

What good looks like for this metric: Less than 15%

Ideas to improve this metric:

- Increase testing coverage
- Conduct thorough code reviews
- Implement feature flags
- Improve rollback procedures
- Provide better training for developers

4. Mean Time to Recovery (MTTR)

Average time taken to recover from a failure. Reflects the team's ability to handle incidents.

What good looks like for this metric: Less than one hour

Ideas to improve this metric:

- Establish clear incident response protocols
- Automate recovery processes
- Enhance monitoring and alerts
- Regularly conduct disaster recovery drills
- Analyse incidents post-mortem to prevent recurrence

5. Number of Bugs Found Post-Release

The count of bugs discovered by users post-release. Indicates the quality of software before deployment.

What good looks like for this metric: Fewer than 5 bugs per release

Ideas to improve this metric:

- Enhance pre-release testing
- Implement user acceptance testing
- Increase use of beta testing
- Utilise static code analysis tools
- Improve requirement gathering and planning


1. Feature Implementation Ratio

The ratio of implemented features to planned features.

What good looks like for this metric: 80-90%

Ideas to improve this metric:

- Prioritise features based on user impact
- Allocate dedicated resources for feature development
- Conduct regular progress reviews
- Utilise agile methodologies for iteration
- Ensure clear feature specifications

2. User Acceptance Test Pass Rate

Percentage of features passing user acceptance testing.

What good looks like for this metric: 95%+

Ideas to improve this metric:

- Enhance test case design
- Involve users early in the testing process
- Provide comprehensive user training
- Utilise automated testing tools
- Identify and fix defects promptly

3. Bug Resolution Time

Average time taken to resolve bugs during feature development.

What good looks like for this metric: 24-48 hours

Ideas to improve this metric:

- Implement a robust issue tracking system
- Prioritise critical bugs
- Conduct regular team stand-ups
- Improve cross-functional collaboration
- Establish a swift feedback loop

4. Code Quality Index

Assessment of code quality using a standard index or score.

What good looks like for this metric: 75-85%

Ideas to improve this metric:

- Conduct regular code reviews
- Utilise static code analysis tools
- Refactor code periodically
- Strictly adhere to coding standards
- Invest in developer training

5. Feature Usage Frequency

Frequency at which newly implemented features are used.

What good looks like for this metric: 70%+ usage of released features

Ideas to improve this metric:

- Enhance user interface design
- Provide user guides or tutorials
- Gather user feedback on new features
- Offer feature usage incentives
- Regularly monitor usage statistics


1. Page Load Time

The time it takes for a web page to fully load from the moment the user requests it

What good looks like for this metric: 2 to 3 seconds

Ideas to improve this metric:

- Optimise images and use proper formats
- Minimise CSS and JavaScript files
- Enable browser caching
- Use Content Delivery Networks (CDNs)
- Reduce server response time

2. Time to First Byte (TTFB)

The time it takes for the user's browser to receive the first byte of page content from the server

What good looks like for this metric: Less than 200 milliseconds

Ideas to improve this metric:

- Use faster hosting
- Optimise server configurations
- Use a CDN
- Minimise server workloads with caching
- Reduce DNS lookup times

3. First Contentful Paint (FCP)

The time from when the page starts loading to when any part of the page's content is rendered on the screen

What good looks like for this metric: Less than 1.8 seconds

Ideas to improve this metric:

- Defer non-critical JavaScript
- Reduce the size of render-blocking resources
- Prioritise visible content
- Optimise fonts and text rendering
- Minimise main-thread work

4. JavaScript Error Rate

The percentage of user sessions that encounter JavaScript errors on the site

What good looks like for this metric: Less than 1%

Ideas to improve this metric:

- Thoroughly test code before deployment
- Use error tracking tools
- Handle exceptions properly in the code
- Keep third-party scripts updated
- Perform regular code reviews

5. User Satisfaction (Apdex) Score

A metric that measures user satisfaction based on response times, calculated as the number of satisfactory responses plus half the number of tolerable responses, divided by the total number of responses

What good looks like for this metric: 0.8 or higher

Ideas to improve this metric:

- Monitor and analyse performance regularly
- Focus on optimising high-traffic pages
- Implement user feedback mechanisms
- Ensure responsive design principles are followed
- Prioritise backend performance improvement
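The Apdex score is worth a worked example because it is not a plain average. In the standard Apdex definition, you pick a target response time T, count responses at or below T as "satisfied", responses between T and 4T as "tolerating" (worth half a point each), and anything slower as "frustrated" (worth nothing). The sketch below follows that definition; the function name, the 0.5 second threshold, and the sample timings are assumptions chosen for illustration.

```python
def apdex(response_times_s: list[float], threshold_s: float) -> float:
    """Apdex = (satisfied + tolerating / 2) / total samples."""
    satisfied = sum(1 for t in response_times_s if t <= threshold_s)
    tolerating = sum(
        1 for t in response_times_s if threshold_s < t <= 4 * threshold_s
    )
    # Frustrated samples (slower than 4T) contribute nothing to the score.
    return (satisfied + tolerating / 2) / len(response_times_s)


# Made-up response times in seconds, scored against a 0.5 s target.
samples = [0.2, 0.3, 0.4, 0.6, 1.5, 2.5, 0.1, 0.45, 1.9, 3.0]

print("Apdex:", apdex(samples, threshold_s=0.5))
```

Here five responses are satisfied, three are tolerating, and two are frustrated, giving (5 + 1.5) / 10 = 0.65, which falls short of the 0.8 target.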


1. Deployment Frequency

Measures how often new updates are deployed to production

What good looks like for this metric: Once per week

Ideas to improve this metric:

- Automate deployment processes
- Implement continuous integration
- Use feature toggles
- Practise trunk-based development
- Reduce batch sizes

2. Lead Time for Changes

Time taken from code commit to deployment in production

What good looks like for this metric: One day to one week

Ideas to improve this metric:

- Improve code review process
- Minimise work in progress
- Optimise build processes
- Automate testing pipelines
- Implement parallel builds

3. Mean Time to Recovery

Time taken to recover from production failures

What good looks like for this metric: Less than one hour

Ideas to improve this metric:

- Implement robust monitoring tools
- Create a clear incident response plan
- Use canary releases
- Conduct regular disaster recovery drills
- Enhance rollback procedures

4. Change Failure Rate

Percentage of changes that result in production failures

What good looks like for this metric: Less than 15%

Ideas to improve this metric:

- Increase test coverage
- Perform thorough code reviews
- Conduct root cause analysis
- Use static code analysis tools
- Implement infrastructure as code

5. Cycle Time

Time to complete one development cycle from start to finish

What good looks like for this metric: Two weeks

Ideas to improve this metric:

- Adopt agile methodologies
- Limit work in progress
- Use time-boxed sprints
- Continuously prioritise tasks
- Improve collaboration among teams
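Deployment frequency is simply a count of production deployments per period. A minimal sketch, assuming you have a list of deployment dates (the dates below are made up), groups them by ISO week. Note that weeks with no deployments do not appear in the result, so the average is taken only over weeks that had at least one deployment.

```python
from collections import Counter
from datetime import date


def deployments_per_week(deploy_dates: list[date]) -> dict[tuple[int, int], int]:
    """Count deployments per (ISO year, ISO week number)."""
    return dict(Counter(tuple(d.isocalendar()[:2]) for d in deploy_dates))


# Made-up deployment dates spread over three weeks in March 2024.
deploy_dates = [
    date(2024, 3, 4), date(2024, 3, 6),
    date(2024, 3, 12), date(2024, 3, 14), date(2024, 3, 15),
    date(2024, 3, 20),
]

weekly = deployments_per_week(deploy_dates)
average = sum(weekly.values()) / len(weekly)

for (year, week), count in sorted(weekly.items()):
    print(f"{year}-W{week:02d}: {count} deployment(s)")
print("Average per active week:", average)
```

This sample team averages two deployments per week, which beats the "once per week" target above.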


1. Response Time

The time taken for a system to respond to a request, typically measured in milliseconds.

What good looks like for this metric: 100-200 ms

Ideas to improve this metric:

- Optimise database queries
- Use efficient algorithms
- Implement caching strategies
- Scale infrastructure
- Minimise network latency

2. Error Rate

The percentage of requests that result in errors, such as 4xx or 5xx HTTP status codes.

What good looks like for this metric: Less than 1%

Ideas to improve this metric:

- Improve input validation
- Conduct thorough testing
- Use error monitoring tools
- Implement robust exception handling
- Optimise API endpoints

3. Requests Per Second (RPS)

The number of requests the server can handle per second.

What good looks like for this metric: 1000-5000 RPS

Ideas to improve this metric:

- Use load balancing
- Optimise server performance
- Increase concurrency
- Implement rate limiting
- Scale vertically and horizontally

4. CPU Utilisation

The percentage of CPU resources used by the backend server.

What good looks like for this metric: 50-70%

Ideas to improve this metric:

- Profile and optimise code
- Distribute workloads evenly
- Scale infrastructure
- Use efficient data structures
- Reduce computational complexity

5. Memory Usage

The amount of memory consumed by the backend server.

What good looks like for this metric: Less than 85% of total memory

Ideas to improve this metric:

- Identify and fix memory leaks
- Optimise data storage
- Use garbage collection
- Implement memory caching
- Scale infrastructure
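Response time, error rate, and requests per second can all be read off the same request log. The following sketch assumes a hypothetical `Request` record with a status code and a duration; the sample log and the 5 second observation window are made up. It uses a nearest-rank percentile, one of several common percentile definitions, because a high percentile often describes user-facing latency better than an average.

```python
import math
from dataclasses import dataclass


@dataclass
class Request:
    """One handled request (hypothetical log record shape)."""
    status: int         # HTTP status code
    duration_ms: float  # time taken to respond


def error_rate_percent(requests: list[Request]) -> float:
    """Percentage of requests that returned a 4xx or 5xx status."""
    errors = sum(1 for r in requests if r.status >= 400)
    return 100 * errors / len(requests)


def requests_per_second(requests: list[Request], window_s: float) -> float:
    """Requests handled per second over the observation window."""
    return len(requests) / window_s


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Made-up log: ten requests observed over a 5 second window.
durations = [120, 95, 110, 180, 150, 130, 90, 300, 105, 140]
statuses = [200, 200, 404, 200, 200, 200, 200, 500, 200, 200]
requests = [Request(s, d) for s, d in zip(statuses, durations)]

print("Error rate (%):", error_rate_percent(requests))
print("Requests per second:", requests_per_second(requests, window_s=5.0))
print("p50 response time (ms):", percentile(durations, 50))
print("p95 response time (ms):", percentile(durations, 95))
```

In this sample the median response time is 120 ms, inside the 100-200 ms range, but the 95th percentile is 300 ms and the error rate is 20%, so the slow and failing requests are where the attention should go.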


1. Defect Density

Measures the number of defects per unit size of the software, usually per thousand lines of code

What good looks like for this metric: 1-10 defects per KLOC

Ideas to improve this metric:

- Implement code reviews
- Increase automated testing
- Enhance developer training
- Use static code analysis tools
- Adopt Test-Driven Development (TDD)

2. Mean Time to Failure (MTTF)

Measures the average time a system or component operates before it fails

What good looks like for this metric: Varies widely by industry and system type, generally higher is better

Ideas to improve this metric:

- Conduct regular maintenance routines
- Implement rigorous testing cycles
- Enhance monitoring and alerting systems
- Utilise redundancy and failover mechanisms
- Improve codebase documentation

3. Customer-Reported Incidents

Counts the number of issues or bugs reported by customers within a given period

What good looks like for this metric: Varies depending on product and customer base, generally lower is better

Ideas to improve this metric:

- Engage in proactive customer support
- Release regular updates and patches
- Conduct user feedback sessions
- Improve user documentation
- Monitor and analyse incident trends

4. Code Coverage

Indicates the percentage of the source code covered by automated tests

What good looks like for this metric: 70-90% code coverage

Ideas to improve this metric:

- Increase unit testing
- Use automated testing tools
- Adopt continuous integration practices
- Refactor legacy code
- Integrate end-to-end testing

5. Release Frequency

Measures how often new releases are deployed to production

What good looks like for this metric: Depends on product and development cycle; frequently updated software is often more reliable

Ideas to improve this metric:

- Adopt continuous delivery
- Automate deployment processes
- Improve release planning
- Reduce deployment complexity
- Engage in regular sprint retrospectives


1. Vulnerability Density

Measures the number of vulnerabilities per thousand lines of code. It helps to identify vulnerable areas in the codebase that need attention.

What good looks like for this metric: 0-1 vulnerabilities per KLOC

Ideas to improve this metric:

- Conduct regular code reviews
- Use static analysis tools
- Implement secure coding practices
- Provide security training for developers
- Perform security-focused testing

2. Mean Time to Resolve Vulnerabilities (MTTR)

The average time it takes to resolve vulnerabilities from the time they are identified.

What good looks like for this metric: Less than 30 days

Ideas to improve this metric:

- Prioritise vulnerabilities based on severity
- Automate vulnerability management processes
- Allocate dedicated resources for vulnerability remediation
- Establish a clear vulnerability response process
- Regularly monitor and report on MTTR

3. Percentage of Code Covered by Security Testing

The proportion of the codebase that is covered by security tests, helping to ensure code is thoroughly tested for vulnerabilities.

What good looks like for this metric: 90% or higher

Ideas to improve this metric:

- Increase the frequency of security tests
- Use automated security testing tools
- Integrate security tests into the CI/CD pipeline
- Regularly update and expand test cases
- Provide training on writing effective security tests

4. Number of Security Incidents

The total count of security incidents, including breaches, detected within a given period.

What good looks like for this metric: Zero incidents

Ideas to improve this metric:

- Implement continuous monitoring
- Conduct regular penetration testing
- Deploy intrusion detection systems
- Educate employees on security best practices
- Establish a strong incident response plan

5. False Positive Rate of Security Tools

The percentage of security alerts that are not true threats, which can lead to resource wastage and alert fatigue.

What good looks like for this metric: Less than 5%

Ideas to improve this metric:

- Regularly update security tool configurations
- Train security teams to properly interpret alerts
- Use machine learning to improve tool accuracy
- Combine multiple security tools for better context
- Implement regular reviews of alerts to refine rules


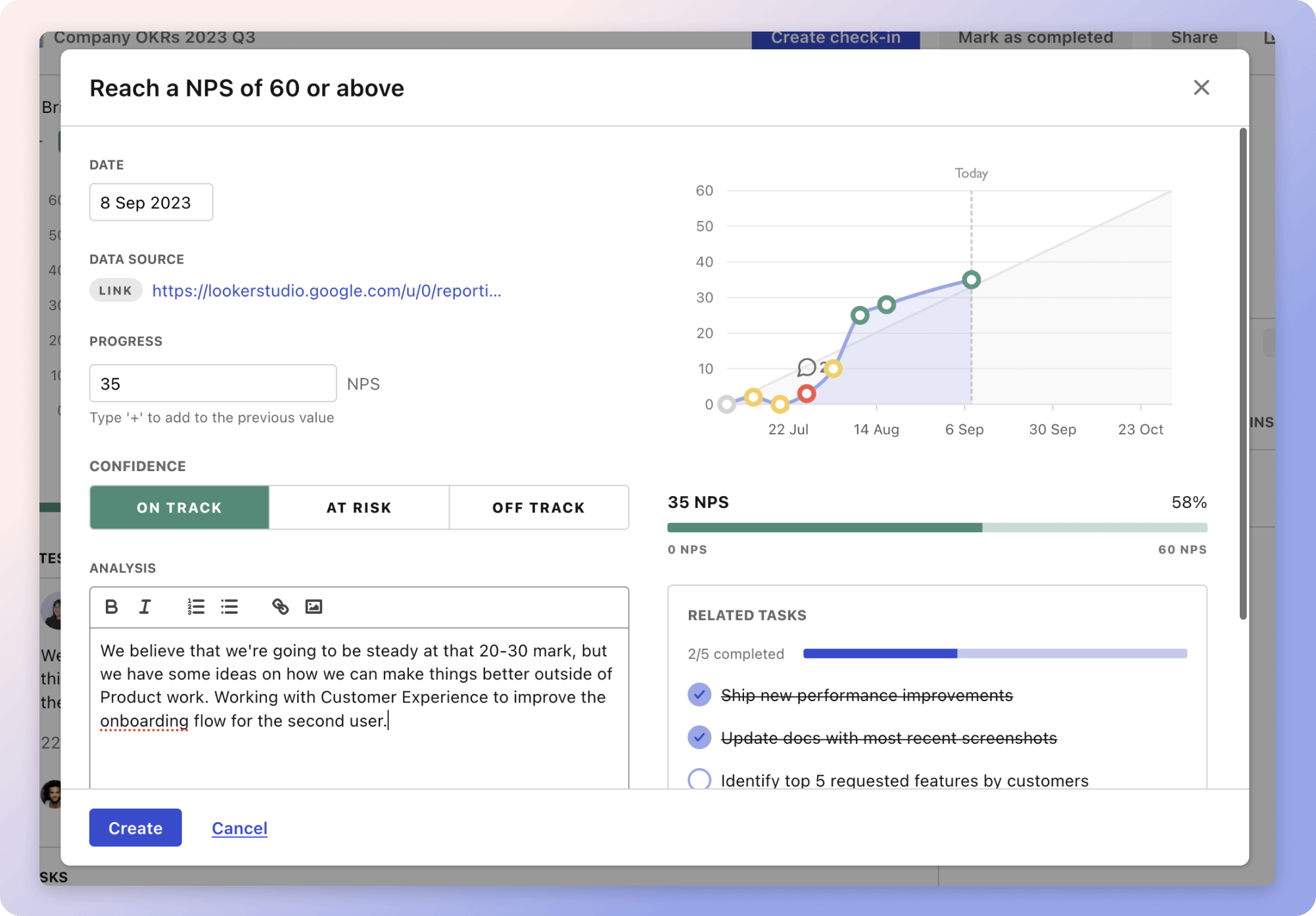

Tracking your Developer metrics

Having a plan is one thing, sticking to it is another.

Don't fall into the set-and-forget trap. It is important to adopt a weekly check-in process to keep your strategy agile – otherwise this is nothing more than a reporting exercise.

A tool like Tability can also help you by combining AI and goal-setting to keep you on track.

More metrics recently published

We have more examples to help you below.

Planning resources

OKRs are a great way to translate strategies into measurable goals. Here is a list of resources to help you adopt the OKR framework:

Tability's check-ins will save you hours and increase transparency

The best metrics for Work Performance Evaluation

The best metrics for Investment Group Success

The best metrics for Showcase Team Performance

The best metrics for Youth Entrepreneurship Training

The best metrics for Support Youth Entrepreneurship

The best metrics for Youth Employability Improvement